Are you overpaying for brand-name LLMs?

Every few weeks, another closed-source, branded LLM launches: GPT-5.4 Pro, Claude Opus 4.5, Gemini 3.1 Pro, and so on. Each one comes with impressive benchmarks and a price tag to match, carrying the implicit message that top intelligence costs top dollar.

At The Grid, we study inference economics closely, tracking benchmarks, provider pricing, and how fragmented the supply side has become. We pulled five months of pricing data from OpenRouter [1], cross-referenced it with the Artificial Analysis Intelligence Index [2] and WhatLLM's quarterly benchmark comparisons [3], and the conclusion is straightforward: open-source models now match closed-source on nearly every benchmark, at a fraction of the cost. The brand premium is getting very hard to justify.

Open-source AI has caught closed-source on nearly every benchmark

A year ago, choosing an open-source model for production meant accepting a meaningful quality hit. That trade-off has mostly evaporated.

On MMLU, the single most referenced language model benchmark, the gap between the best open-source and best closed-source model went from 17.5 points in January 2025 to 0.3 points by January 2026 [4]. That's statistical noise.

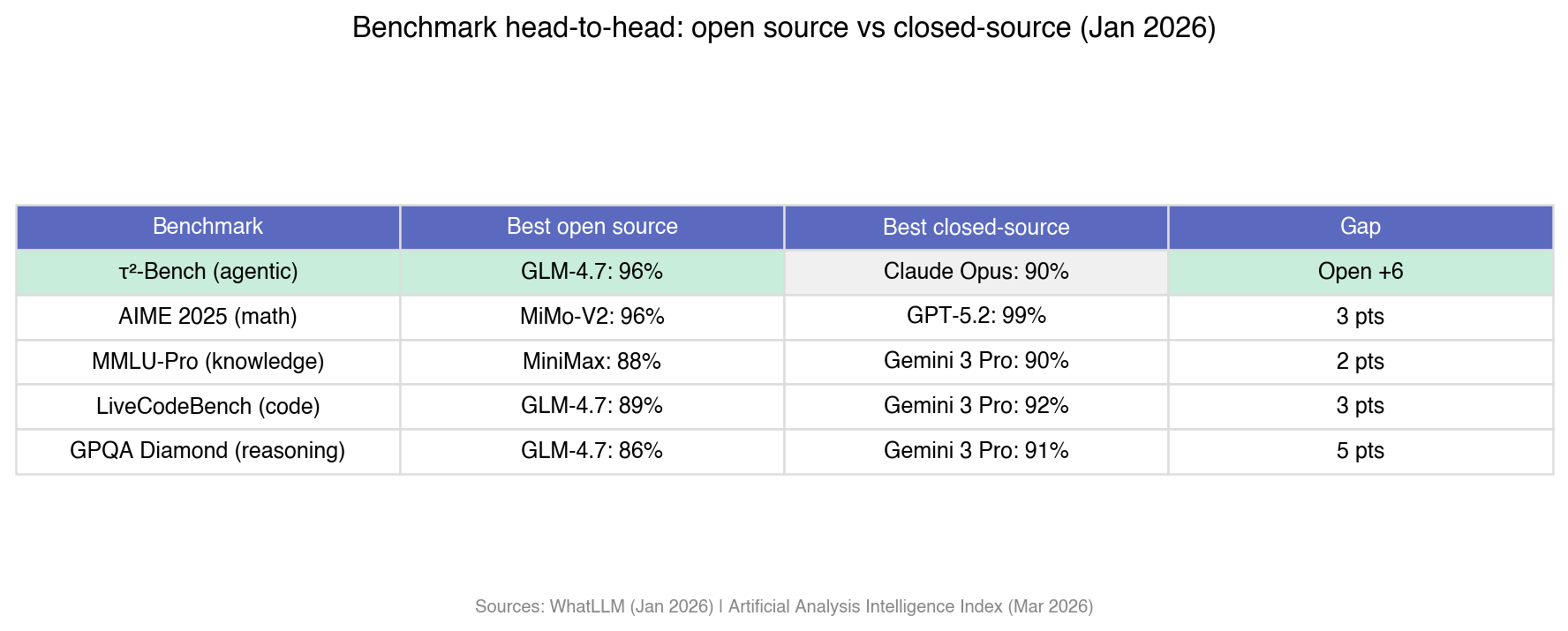

The Artificial Analysis Intelligence Index [2], a composite of 10 evaluations covering coding, reasoning, agentic tasks, knowledge, and instruction following, shows the top closed-source models (Gemini 3.1 Pro Preview, GPT-5.4) scoring 57. The top open-weights model (GLM-5) scores 50. Seven points on the composite. But the individual benchmarks tell a more interesting story, because open source is already winning on several of them.

On tau-squared-Bench, which measures agentic tool use, Z AI's GLM-4.7 scores 96% against Claude Opus 4.5 at 90% [3]. Six-point lead for open source, on the capability that will probably define the next wave of AI products.

AIME 2025 (math): Xiaomi's MiMo-V2-Flash hits 96%, tying Gemini 3 Pro [3]. MMLU-Pro (knowledge): MiniMax at 88% versus Gemini 3 Pro's 90%. LiveCodeBench (code): 89% versus 92%.

The only area where closed-source holds a comfortable lead is GPQA Diamond (graduate-level scientific reasoning) at 91% versus 86%. Even that narrows every quarter [3].

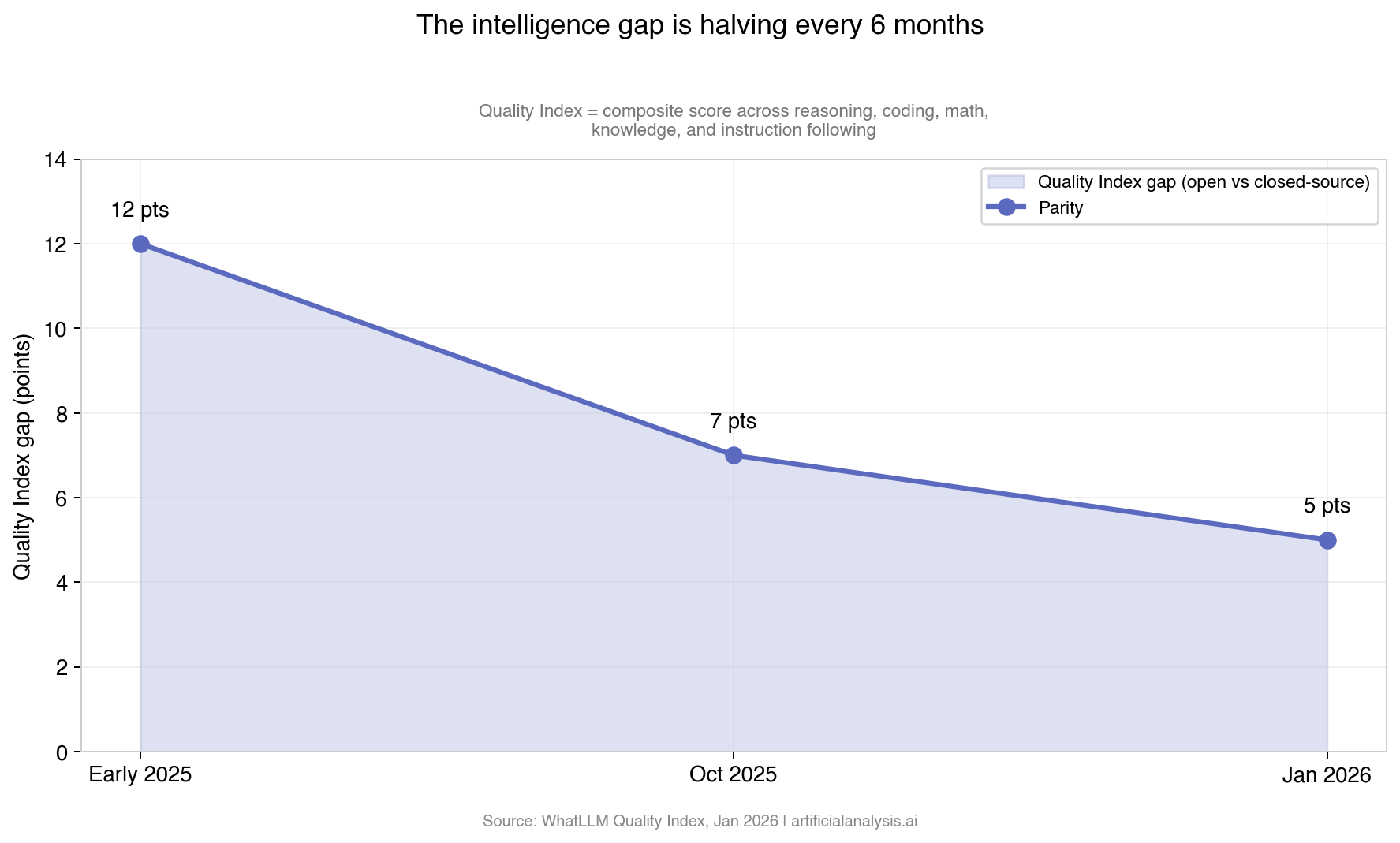

WhatLLM tracks what it calls a Quality Index, a composite score that rolls up accuracy across reasoning, coding, math, knowledge, and instruction following for each model [3]. Over the past year, the gap between the best open and best closed-source model on that index went from 12 points in early 2025, to 7 by October, to 5 by January 2026. At the most recent quarter's pace, that is a halving roughly every six months.
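The pace of that narrowing depends on which window you measure. The sketch below estimates a halving time from the three gap figures quoted above, assuming steady exponential decay between readings and taking "early 2025" to mean January 2025 (both are assumptions made here, not claims from WhatLLM). The function name `halving_time_months` is illustrative.

```python
import math

def halving_time_months(gap_start: float, gap_end: float, months_elapsed: float) -> float:
    """Months for the gap to halve, assuming steady exponential decay."""
    if gap_start <= 0 or gap_end <= 0 or gap_end >= gap_start:
        raise ValueError("expects a positive, shrinking gap")
    return months_elapsed * math.log(2) / math.log(gap_start / gap_end)

# Quality Index gaps quoted in the text. The month counts are assumptions:
# "early 2025" is treated as January 2025.
full_year = halving_time_months(12, 5, months_elapsed=12)      # Jan 2025 -> Jan 2026
latest_quarter = halving_time_months(7, 5, months_elapsed=3)   # Oct 2025 -> Jan 2026

print(f"Full year:      gap halves every {full_year:.1f} months")
print(f"Latest quarter: gap halves every {latest_quarter:.1f} months")
```

Measured over the latest quarter, the halving time comes out close to six months. Measured over the full year it is longer, so the headline pace is sensitive to the window chosen.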

What got us here? Billions in open-weight investment from Meta, Alibaba, DeepSeek, Z AI, and Mistral. Mixture-of-Experts architectures that let models punch above their active parameter count. Post-training techniques like RLHF and DPO that used to be closely guarded and are now published, open-sourced, and widely replicated [4].

The brand-name tax: 10–55x the price for single-digit performance gains

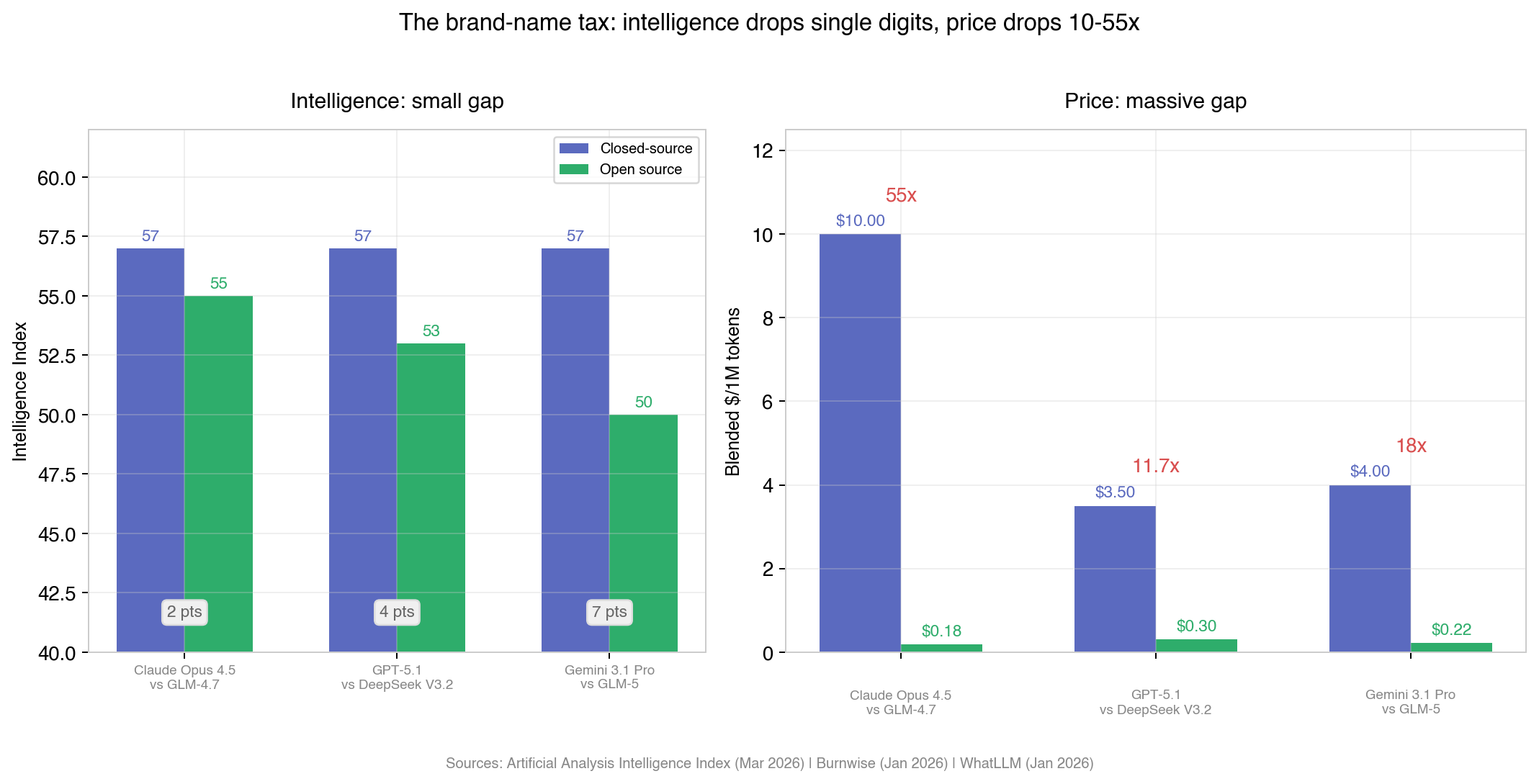

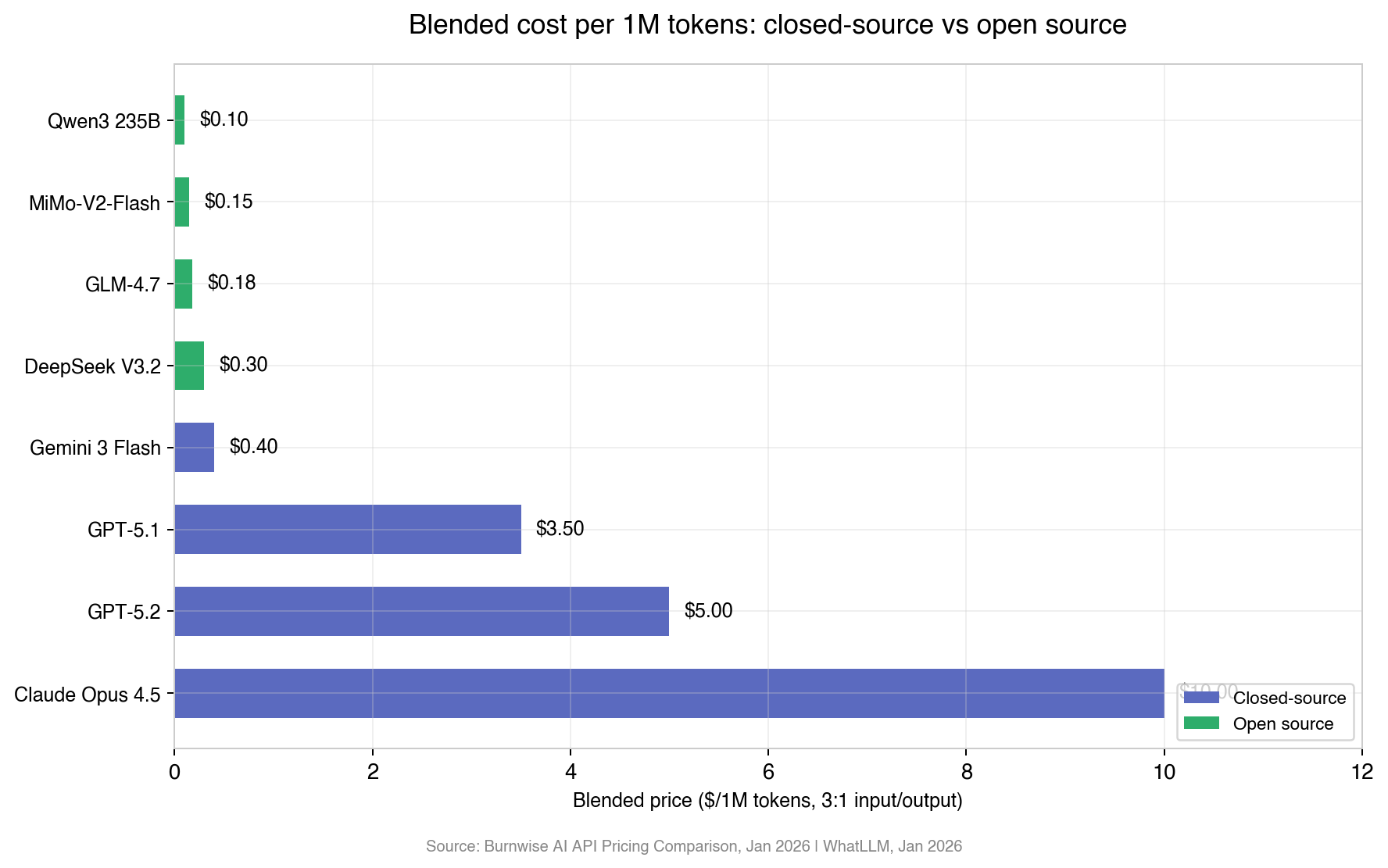

Take the three most capable closed-source models available today and match each one against its closest open-source competitor.

Claude Opus 4.5 costs 55 times more than GLM-4.7. The intelligence difference? Two points on a 73-point scale (based on Artificial Analysis’ Intelligence Index). GPT-5.1 costs nearly 12 times more than DeepSeek V3.2 for four points. Even in the pairing with the widest intelligence gap, Gemini 3.1 Pro versus GLM-5, you pay 18 times more for 7 points.

Prices drop by an order of magnitude. Intelligence drops by single digits.
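One rough way to compare the three pairings is to divide each price multiple by the point gap it buys. The sketch below does that with the figures quoted in the text ("nearly 12 times" is rounded to 12). The `Pairing` class and the "multiple per point" ratio are illustrative constructs introduced here, a crude heuristic rather than a metric published by Artificial Analysis.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Pairing:
    closed: str
    open_: str
    price_multiple: float  # closed-source price divided by open-source price
    point_gap: float       # Intelligence Index points gained by paying more

    @property
    def multiple_per_point(self) -> float:
        """Price multiple paid per extra index point (illustrative heuristic)."""
        return self.price_multiple / self.point_gap

# Figures as quoted in the article; 12 is a rounding of "nearly 12 times".
PAIRINGS = [
    Pairing("Claude Opus 4.5", "GLM-4.7", 55, 2),
    Pairing("GPT-5.1", "DeepSeek V3.2", 12, 4),
    Pairing("Gemini 3.1 Pro", "GLM-5", 18, 7),
]

# List the pairings from steepest to mildest premium per point.
for p in sorted(PAIRINGS, key=lambda p: p.multiple_per_point, reverse=True):
    print(
        f"{p.closed} vs {p.open_}: {p.price_multiple:.0f}x the price for "
        f"{p.point_gap:.0f} points ({p.multiple_per_point:.1f}x per point)"
    )
```

Index points are not linear units of usefulness, so the ratio is only a way of ranking the pairings against each other, not a measure of value.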

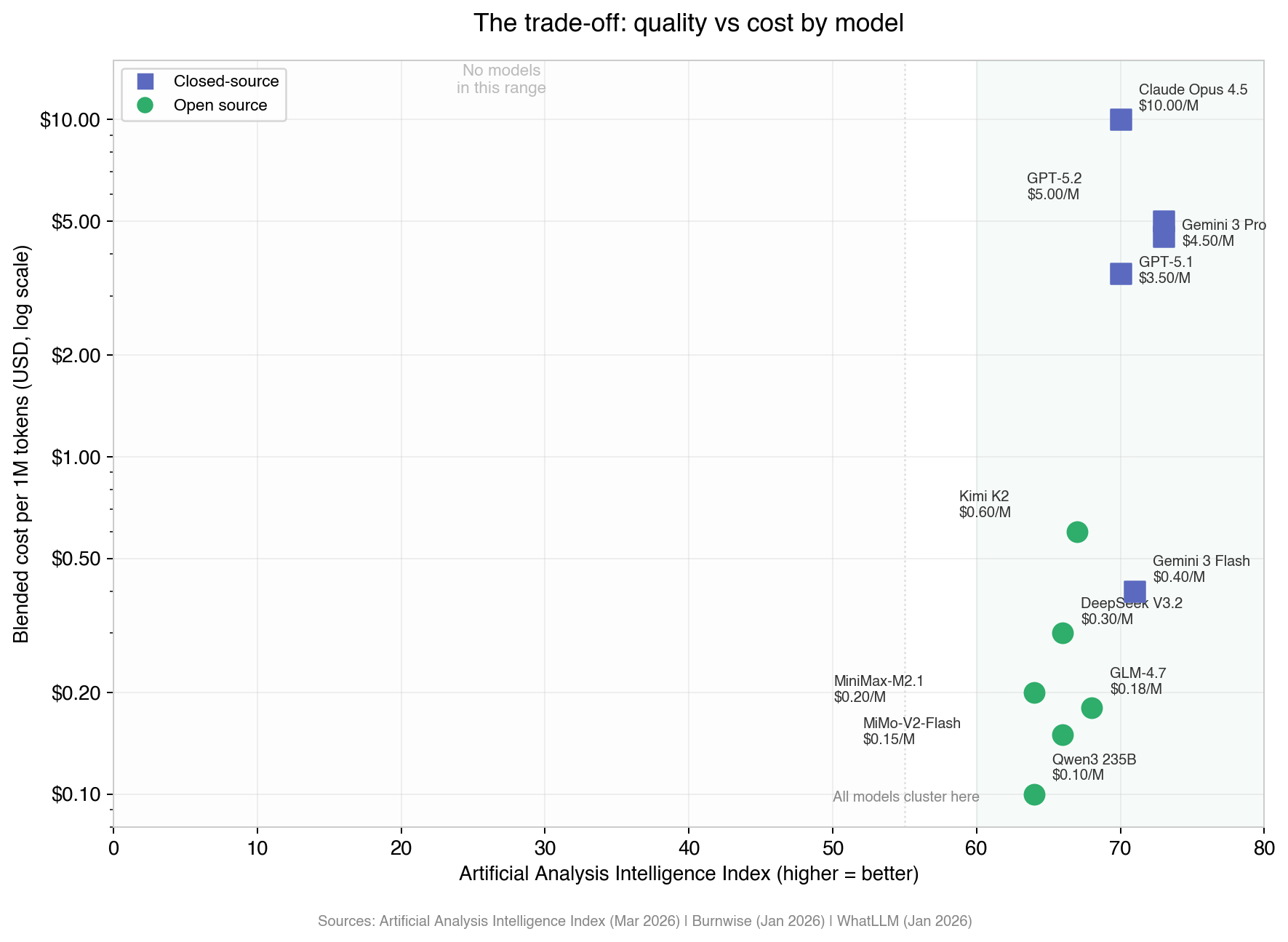

The scatterplot makes the trade-off visible.

The x-axis is the Artificial Analysis Intelligence Index (a composite of 10 evaluations), and every single model, open and closed source, clusters at the far right of the chart. They are all high performers. The vertical spread is where the story is: closed-source models sit at $3.50 to $10.00, while open-source alternatives with near-identical intelligence scores sit at $0.10 to $0.60. The gap between them is almost entirely price, not intelligence.

For frontier research or specialized scientific reasoning where those few points change outcomes, the premium might make sense. For chatbots, classification, extraction, summarization, and code assistance, the workloads that account for the vast majority of production inference, your users will never notice the difference, but your finance team will.

Would you pay 55x more for 2 extra points of intelligence?

Candidly, for most production workloads, a premium of this magnitude rarely makes sense.

The broader trend pushes the same direction. Capability-adjusted inference prices have fallen roughly 90% since 2023 [5]. Research using Artificial Analysis historical data puts the decline at 5-10x per year for a given performance level [6]. Despite all that compression, enterprise AI spending tripled to $37 billion in 2025, and the global inference market crossed $106 billion [7]. Turns out inference demand is deeply price-elastic. The cheaper it gets, the more people use it.

That flips the risk. Your exposure isn't quality loss from switching to open source. Your exposure is competitors matching your output at a fraction of the cost while you keep paying the brand premium.

Same model, but wildly different prices

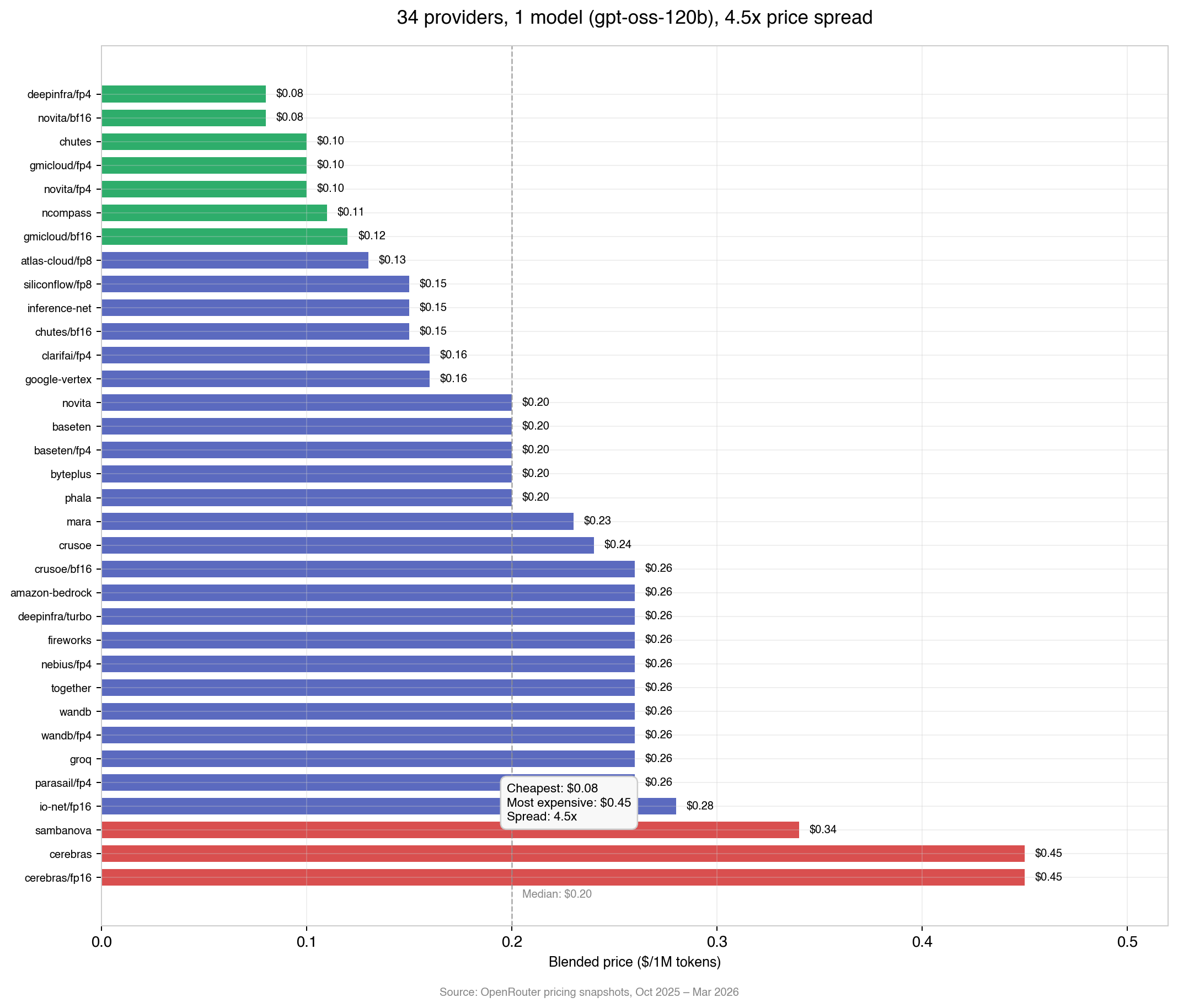

Zoom into a single model and the fragmentation gets even more striking.

We tracked five months of pricing snapshots (October 2025 through March 2026) for OpenAI's gpt-oss-120b, one open-source model hosted by 34 different inference providers [1]. Same weights, same architecture, same output quality across all of them. The only variable is price, and it ranges from $0.08 to $0.45 per million tokens. A spread of more than 5x for identical output.

Several providers cut prices aggressively over this period: gmicloud dropped 61%, novita dropped 60%, Google Vertex dropped 38% [1]. The median barely moved, hovering around $0.20 the whole time. Fierce competition at the edges, sticky pricing in the middle, nothing forcing convergence between them.

If you're buying inference today, you're comparing dashboards across 34 providers with no centralized way to see who's cheapest right now.
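To make the cost of that fragmentation concrete, here is a minimal sketch that turns per-million-token quotes into a monthly bill. The provider names, the intermediate prices, and the 2-billion-token workload are all hypothetical. Only the $0.08 low, the $0.45 high, and the roughly $0.20 median echo figures from the text.

```python
from statistics import median

# Hypothetical quotes in USD per million tokens for one open-weight model.
# Names and middle values are invented for illustration only.
quotes = {
    "provider_a": 0.08,
    "provider_b": 0.15,
    "provider_c": 0.20,
    "provider_d": 0.28,
    "provider_e": 0.45,
}

MONTHLY_TOKENS = 2_000_000_000  # hypothetical workload: 2B tokens per month

def monthly_cost(price_per_million: float, tokens: int) -> float:
    """Monthly bill in USD for a given per-million-token price."""
    return price_per_million * tokens / 1_000_000

cheapest = min(quotes, key=quotes.get)
priciest = max(quotes, key=quotes.get)
mid_price = median(quotes.values())

rows = [
    (f"cheapest ({cheapest})", quotes[cheapest]),
    ("median", mid_price),
    (f"priciest ({priciest})", quotes[priciest]),
]
for label, price in rows:
    cost = monthly_cost(price, MONTHLY_TOKENS)
    print(f"{label:>22}: ${price:.2f}/M tokens -> ${cost:,.0f}/month")
```

In practice the quotes would come from each provider's live pricing, which is exactly the lookup that has no single source today.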

And that's before you count total cost of ownership

Everything above is per-token pricing. Your actual inference spend includes model evaluation, migration, and ongoing monitoring, none of which show up on the API bill. Some teams spend as much engineering time chasing better models as they save by switching to them. With dozens of providers today and more every quarter, that overhead only compounds.

The pattern is familiar. Electricity, natural gas, and cloud compute all went through the same phase: product standardizes, suppliers multiply, prices compress, and the friction of navigating it all becomes the real cost.

Eventually someone builds market infrastructure to fix that. That's what we're building at The Grid: standardized inference instruments that track performance tiers, not model names. Teams buy inference at the market price instead of managing models, providers, and pricing themselves.

A year from now, the benchmarks will be even closer and the open-source options even cheaper. The teams that recognize this early won't just cut costs; they'll ship faster and with more efficient token economics than competitors still paying the brand tax on every API call. The Grid is in early beta, and we're offering $25 in sign-up credit plus up to $60 of free inference every month for early users. Make your first request in under five minutes today!

Sources

[1] OpenRouter - https://openrouter.ai/

[2] Artificial Analysis Intelligence Index (March 2026) - https://artificialanalysis.ai/leaderboards/models

[3] WhatLLM, "January 2026: Open source vs proprietary LLMs compared" - https://whatllm.org/blog/january-2026-open-source-vs-proprietary

[4] Awesome Agents, "The Gap Between Open-Source and Proprietary AI Has Effectively Vanished" (February 2026) - https://awesomeagents.ai/news/open-source-vs-proprietary-gap-vanishes/

[5] Burnwise, "AI API Pricing Comparison 2026" (January 2026) - https://www.burnwise.io/blog/ai-api-pricing-comparison-2026

[6] Epoch AI, "Algorithmic progress in language models" (2025) - https://arxiv.org/pdf/2511.23455

[7] DataDrivenInvestor, "Who profits when AI models are free?" (February 2026) - https://medium.datadriveninvestor.com/who-profits-when-ai-models-are-free-b71ae03f4167

X article

Are you overpaying for brand-name LLMs?

Every few weeks, a new branded LLM is released (e.g., GPT-5.4 Pro, Claude Opus 4.5, Gemini 3.1 Pro), each offering incremental improvements, often at a higher price point.

Each comes with strong benchmarks and a price tag to match, carrying the implicit message that top intelligence costs top dollar.

At The Grid, we study inference economics closely, tracking benchmarks, provider pricing, and supply-side fragmentation.

We pulled five months of pricing data from OpenRouter and cross-referenced it with the Artificial Analysis Intelligence Index and WhatLLM benchmarks.

The conclusion is straightforward:

Open-source models now match closed-source on nearly every benchmark, at a fraction of the cost.

The brand premium is getting very hard to justify.

Open-source AI has caught closed-source

A year ago, choosing open-source meant accepting a real quality trade-off.

That trade-off has mostly evaporated.

On MMLU, the gap between the best open-source and closed-source model went from 17.5 points to 0.3 points in a year.

That’s statistical noise.

Across benchmarks:

- Agentic tool use → open source leads

- Math → tied

- Knowledge → ~2-point gap

- Code → ~3-point gap

The only clear lead left is GPQA (scientific reasoning). Even that is narrowing.

The broader trend is consistent:

- 12-point gap → early 2025

- 7 points → late 2025

- 5 points → Jan 2026

At the latest quarter's pace, the gap is halving every ~6 months.

The brand-name tax

Now look at pricing.

Claude Opus 4.5 is ~55x more expensive than GLM-4.7, for a 2-point difference.

GPT-5.1 is ~12x more than DeepSeek V3.2, for 4 points.

Gemini 3.1 Pro is ~18x more than GLM-5, for 7 points.

Prices drop by an order of magnitude.

Intelligence drops by single digits.

The trade-off

Plot every model on one chart and they cluster tightly on performance. All of them are high quality.

The spread is in price:

- Closed-source: ~$3.50–$10

- Open-source: ~$0.10–$0.60

The gap between them is almost entirely price.

For most production workloads (e.g., chatbots, extraction, summarization, code), users probably won’t even notice the difference.

Would you pay 55x more for 2 points?

For most use cases, it rarely makes sense.

The macro trend reinforces it:

- Inference costs down ~90% since 2023

- 5–10x yearly efficiency gains

- AI spend still tripled to $37B

Inference demand is deeply price-elastic.

The cheaper it gets, the more it’s used.

The real risk isn’t switching. It’s competitors matching your output at a fraction of the cost while you keep paying the premium.

Same model, wildly different prices

Prices vary widely even for identical models.

We tracked one open-source model across 34 providers:

- Same weights

- Same output

- Prices: $0.08 → $0.45

A spread of more than 5x for identical inference.

Providers are cutting aggressively, but pricing remains fragmented.

If you’re buying inference today, you’re manually comparing dashboards with no real-time price discovery.

And that’s before total cost

Per-token pricing is only part of it.

There’s also:

- Model evaluation

- Migration

- Ongoing monitoring

Some teams spend as much time managing models as they save switching to them.

As the market fragments, this overhead only grows.

AI inference is becoming a commodity

The pattern is familiar:

Product standardizes → suppliers multiply → prices compress → navigation becomes the real cost.

Electricity. Cloud. Bandwidth.

AI inference is following the same path.

The takeaway

A year from now:

- Benchmarks will be closer

- Open-source will be cheaper

- Supply will be more fragmented

Interested in learning how to cut your inference costs without compromising performance?